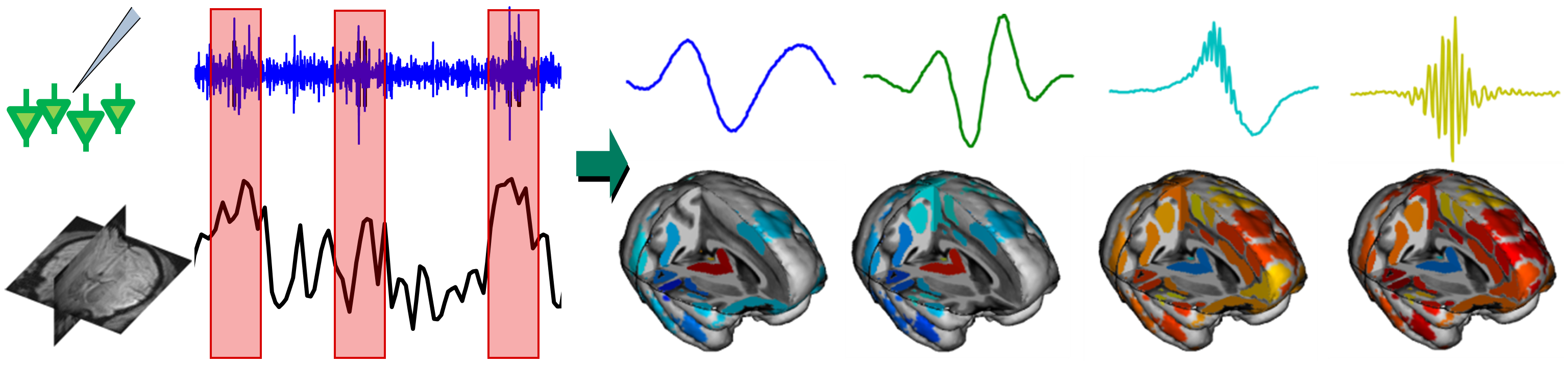

Left: example of an LFP signal (blue) and concurrent fMRI activity (black); repetitive dynamical patterns are marked by red blocks. Right: learned dictionary of dynamical patterns, together with their brain-wide signatures.

Mammalian brains efficiently combine perception, decision making, and motor commands within hundreds of milliseconds. Machine learning can help us understand these distributed information processing capabilities.

A first application of machine learning algorithms to neuroscience is the extraction of information from complex brain signals, focusing on their dynamical aspects. This includes the design of non-parametric statistical dependency measures for time series using an implicit mapping in a Reproducing Kernel Hilbert Space to capture complex non-linear dependencies between brain rhythms [ ], as well as dictionary learning techniques that automatically identify the transient dynamical patterns in ongoing Local Field Potentials (LFP) of a given brain structure that have an impact on the activity of the whole brain [ ].
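To make the idea of a kernel dependency measure concrete, here is a minimal sketch of the Hilbert-Schmidt Independence Criterion (HSIC), a standard RKHS-based statistic. It is an illustrative stand-in, not the time-series measure developed in the cited work: it assumes scalar, independently drawn samples, a Gaussian kernel, and a hand-picked bandwidth of 1.0, and the function names are hypothetical.

```python
import math
import random


def gaussian_gram(samples, bandwidth):
    """Gram matrix K[i][j] = exp(-(s_i - s_j)^2 / (2 * bandwidth^2))."""
    scale = 2.0 * bandwidth ** 2
    return [[math.exp(-((a - b) ** 2) / scale) for b in samples] for a in samples]


def center(gram):
    """Double-center a symmetric Gram matrix (H K H with H = I - 1/n)."""
    n = len(gram)
    row_means = [sum(row) / n for row in gram]
    total_mean = sum(row_means) / n
    return [
        [gram[i][j] - row_means[i] - row_means[j] + total_mean for j in range(n)]
        for i in range(n)
    ]


def hsic(x, y, bandwidth=1.0):
    """Biased HSIC estimate, trace(K H L H) / n^2 (assumed bandwidth)."""
    n = len(x)
    k_centered = center(gaussian_gram(x, bandwidth))
    l_gram = gaussian_gram(y, bandwidth)
    total = sum(k_centered[i][j] * l_gram[i][j] for i in range(n) for j in range(n))
    return total / n ** 2


if __name__ == "__main__":
    rng = random.Random(0)
    x = [rng.gauss(0.0, 1.0) for _ in range(200)]
    # y depends on x non-linearly; its linear correlation with x is near zero.
    y = [v * v + 0.1 * rng.gauss(0.0, 1.0) for v in x]
    # z is drawn independently of x.
    z = [rng.gauss(0.0, 1.0) for _ in range(200)]
    print("HSIC(x, y):", hsic(x, y))
    print("HSIC(x, z):", hsic(x, z))
```

The quadratic relation between `x` and `y` is invisible to a linear correlation coefficient, which is the kind of dependency a kernel statistic is meant to capture. Real brain rhythms are autocorrelated, so a practical test would also need a null distribution that accounts for temporal structure.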

A second objective is to infer causal statements about the organization of underlying neural mechanisms. One key application is the estimation of the direction and strength of information flow across brain networks. We first studied the communication between multiple locations of the visual cortex using an information-theoretic measure of Granger causality, Transfer Entropy, computed between LFP signals during visual stimulation [ ]. Our results suggest the presence of waves that propagate across the cortical tissue in the direction of maximal information flow, routing visual information across the visual cortex [ ]. We also study novel causality principles proposed by our department as an alternative to the Granger causality framework widely used in neuroscience. In particular, we developed a new causal inference method for time series based on the postulate of Independence of Cause and Mechanism. We provided theoretical guarantees for this approach, which outperformed Granger causality on experimental LFP signals [ ].
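As a rough illustration of how Transfer Entropy quantifies directed information flow, the following sketch computes a plug-in estimate for two discrete sequences with a history length of one sample. It is a toy example under stated assumptions, not the estimator used in the cited study: continuous LFP recordings would first need to be discretized or handled with a different estimator, and the function name `transfer_entropy` and the simulated signals are hypothetical.

```python
import math
import random
from collections import Counter


def transfer_entropy(source, target):
    """Plug-in estimate of TE(source -> target) in bits, history length 1.

    TE = sum p(y_next, y_prev, x_prev)
         * log2( p(y_next | y_prev, x_prev) / p(y_next | y_prev) )
    """
    y_next, y_prev, x_prev = target[1:], target[:-1], source[:-1]
    n = len(y_next)
    triples = Counter(zip(y_next, y_prev, x_prev))
    next_prev = Counter(zip(y_next, y_prev))
    prev_src = Counter(zip(y_prev, x_prev))
    prev_only = Counter(y_prev)

    te = 0.0
    for (yn, yp, xp), count in triples.items():
        p_joint = count / n
        p_given_both = count / prev_src[(yp, xp)]
        p_given_self = next_prev[(yn, yp)] / prev_only[yp]
        te += p_joint * math.log2(p_given_both / p_given_self)
    return te


if __name__ == "__main__":
    rng = random.Random(0)
    n = 5000
    # x is a sequence of fair coin flips.
    x = [rng.randint(0, 1) for _ in range(n)]
    # y copies x with a one-step delay; each copied bit is flipped with probability 0.1.
    y = [0] + [x[t] ^ int(rng.random() < 0.1) for t in range(n - 1)]
    print("TE(x -> y):", transfer_entropy(x, y))
    print("TE(y -> x):", transfer_entropy(y, x))
```

Because `y` is driven by the past of `x` and not the reverse, the estimate should be clearly larger in the `x -> y` direction. The plug-in estimator is biased upward for short sequences, so in practice the values are usually compared against surrogate data.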

Causal inference techniques can also be used to assess which aspects of brain activity affect the behavioral outcome of an experiment. Causal terminology is often introduced in the interpretation of neuroimaging data without considering the empirical support for such statements. We investigated which causal statements are warranted and which ones are not supported by empirical evidence [ ]. Our work provides the first comprehensive set of causal interpretation rules for neuroimaging results.

Beyond providing powerful data analysis techniques, machine learning can help us understand brain function from a theoretical perspective. We investigated a standard model of neuronal adaptation, spike-timing-dependent plasticity, in this way and showed that it can be viewed as stochastic gradient descent on a neuromodulatory reward function, implementing a form of empirical risk minimization [ ]. These results help explain the optimality properties of biological learning.